Why A Green Tick Is Not The Same As Approval

Understanding why system-approved passport photos can still be rejected

I recently came across an entertaining read on Reddit and it got me thinking about the automated systems that a lot of retail stores are using these days.

There’s something quietly reassuring about a system that won’t let you fail. Stand here, look straight, wait for the green ticks, and only when everything lines up are you allowed to proceed. In a world where most things feel uncertain, that kind of structure feels like control. It feels like certainty. And when it comes to something as important as a passport photo, people want exactly that.

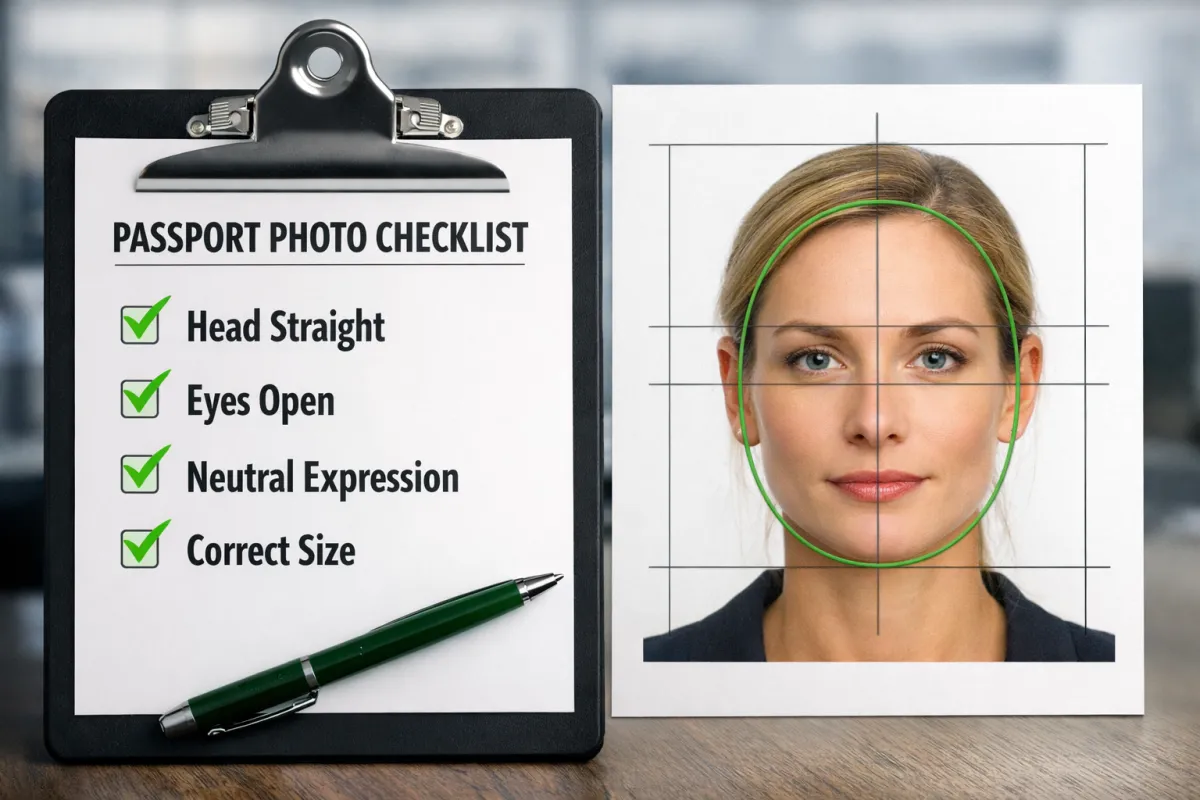

Over the past decade, retail-based passport photo systems, particularly those deployed through large retail organisations, have been built on that promise. The camera connects directly to software that evaluates the image in real time. Your head must sit within a defined space. Your eyes must land within a prescribed zone. Your face must be straight, centred, and free from obstruction. If any of those conditions are not met, the system simply refuses to take the photo. It’s a neat solution to a messy problem.

And on the surface, it works. We've all heard of facial recognition software and most of us use it daily to unlock our phones.

For automated passport photo systems, the logic is simple enough. The photo standards come from ICAO and the passport authority, so if the software enforces those standards, then the outcome should be compliant. The machine becomes the gatekeeper. It removes subjectivity. It eliminates guesswork. It replaces human inconsistency with digital precision.

But that assumption only holds if the system is evaluating everything that matters.

And it can't. There is always more that matters than the standards alone.

Where Automated Compliance Reaches Its Limits

The uncomfortable truth is that the software does exactly what it was designed to do. The problem is not that it fails. The problem is that the scope of the software is limited to, well, the software. It can only measure what it has been programmed to measure, and passport photos are not approved solely on what can be measured.

There are two moments of truth in the passport photo approval journey. The first is when the photo is captured and produced. The second is when it is reviewed by the issuing authority. Most would assume these are governed by the same rules. But with automated systems in play, they simply are not.

At the moment of capture, the automated system is checking geometry. Proportions. Alignment. Basic thresholds. It is making sure you haven’t tilted your head, that your eyes are open, that your face sits within the frame. These are all important, and without them, a photo would almost certainly be rejected. In that sense, the system is doing its job. It is preventing obvious mistakes.
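To make that concrete, here is a minimal sketch of what a threshold-based capture checklist might look like. Everything in it is an assumption for illustration: the `FaceMeasurements` fields, the function names, and especially the threshold values, which are not taken from ICAO or any passport authority, and not from any real retail system.

```python
from dataclasses import dataclass


@dataclass
class FaceMeasurements:
    """Hypothetical values a capture system might extract from one frame."""
    head_height_ratio: float  # head height as a fraction of image height
    eye_line_ratio: float     # eye line position as a fraction of image height
    roll_degrees: float       # sideways head tilt, 0 means upright
    eyes_open: bool


# Illustrative thresholds only. They are NOT taken from ICAO or any
# passport authority; a real system would use its own values.
HEAD_HEIGHT_RANGE = (0.70, 0.80)
EYE_LINE_RANGE = (0.50, 0.70)
MAX_ROLL_DEGREES = 5.0


def geometry_checks(m: FaceMeasurements) -> dict:
    """Return one pass/fail result per geometric rule."""
    return {
        "head_size": HEAD_HEIGHT_RANGE[0] <= m.head_height_ratio <= HEAD_HEIGHT_RANGE[1],
        "eye_position": EYE_LINE_RANGE[0] <= m.eye_line_ratio <= EYE_LINE_RANGE[1],
        "head_straight": abs(m.roll_degrees) <= MAX_ROLL_DEGREES,
        "eyes_open": m.eyes_open,
    }


def green_tick(m: FaceMeasurements) -> bool:
    """The capture is allowed only when every rule passes."""
    return all(geometry_checks(m).values())


if __name__ == "__main__":
    upright = FaceMeasurements(0.75, 0.60, 1.0, True)
    tilted = FaceMeasurements(0.75, 0.60, 8.0, True)
    print(green_tick(upright))  # every rule passes
    print(green_tick(tilted))   # head_straight fails
```

Notice that a checklist like this says nothing about the quality of the light. It can only pass or fail the things it has a number for.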

But when the image leaves that environment and arrives at the point of assessment, the criteria change. The question is no longer “does this meet the automated checklist,” but “does this image hold up under scrutiny against the issuing authority's standards.” And that is a very different question.

Lighting is where this gap becomes most visible. Not lighting in the broad sense, not whether the face is visible, but the quality of that light. Whether it falls evenly across both sides of the face. Whether shadows sit subtly under the chin or creep too far across the jawline. Whether the light is clean, or contaminated by a mix of daylight and artificial sources. These are not things any automated system is particularly good at judging, because they are not easily reduced to simple rules.

A camera can detect extremes. And software can identify when something is obviously wrong. But software is binary and it struggles with nuance. And nuance is often where acceptance or rejection is decided.
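A rough sketch shows why a threshold struggles here. Suppose, purely as an assumption, that a system reduced "even lighting" to a single number: the relative difference in average brightness between the left and right halves of the face. The function names and the 10% cut-off below are hypothetical, chosen only to illustrate the point.

```python
def side_brightness_imbalance(face_pixels):
    """Relative brightness difference between the left and right halves.

    face_pixels is a list of rows of grayscale values (0-255) covering
    the face region. Returns 0.0 for perfectly balanced light.
    """
    width = len(face_pixels[0])
    half = width // 2
    # With an odd width, the middle column is ignored.
    left = [v for row in face_pixels for v in row[:half]]
    right = [v for row in face_pixels for v in row[width - half:]]
    left_mean = sum(left) / len(left)
    right_mean = sum(right) / len(right)
    brighter = max(left_mean, right_mean)
    if brighter == 0:
        return 0.0
    return abs(left_mean - right_mean) / brighter


# Hypothetical cut-off, not taken from any standard or real system.
MAX_IMBALANCE = 0.10


def lighting_passes(face_pixels):
    """Binary verdict: pass if the imbalance is at or under the cut-off."""
    return side_brightness_imbalance(face_pixels) <= MAX_IMBALANCE


if __name__ == "__main__":
    # Two tiny stand-in "faces" whose right sides differ by a few grey levels.
    slightly_uneven = [[200, 200, 182, 182]]
    a_touch_more_uneven = [[200, 200, 178, 178]]
    print(lighting_passes(slightly_uneven))      # imbalance of about 9%
    print(lighting_passes(a_touch_more_uneven))  # imbalance of about 11%
```

The two samples would look almost identical to the eye, yet one gets a tick and the other does not. A human reviewer does not judge light this way, and a single number like this says nothing about where a shadow falls or whether the light is a mix of daylight and artificial sources.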

What makes this more interesting is that the limitation isn’t just technical. It’s physical. The environments these automated systems operate in were never designed to be photographic spaces. They are counters, corners of retail stores, areas exposed to windows, reflections, movement, and inconsistency. Every location is slightly different. Most setups are compromised in some way.

The developers who have been involved in rolling these systems out have spoken about this challenge in practical terms. Finding a place to mount the camera. Dealing with ambient light from a west-facing window in the afternoon. Adjusting for the fact that one store might have a controlled backdrop while another is dealing with spill light from an open entrance. These are not edge cases. They are everyday realities. And when a system designed for consistency is placed into an environment that is inherently inconsistent, something has to give.

Sometimes the workaround is as simple as suggesting the customer come back at a different time of day. It sounds harmless enough, even helpful if inconvenient. But it reveals something fundamental. If the outcome changes depending on the position of the sun, then the process is not controlled. And if it is not controlled, then it cannot be relied upon to produce the same result every time.

Why a Green Tick Is Not the Same as Approval

This is where the idea of “system approval” starts to unravel. Not dramatically, not in a way that suggests the system is broken, but in a quieter, more qualitative way. The system is doing what it can. It is just not doing everything.

And that distinction matters most when things go wrong.

Because when a passport photo is rejected, it is rarely because the subject was wildly out of position or obviously non-compliant. It is usually because of something more subtle. Lighting that wasn’t quite even. A shadow that was just a little too strong. A tonal imbalance that affected how the face was perceived. Things that sit just outside the reach of a checklist.

For the person having their photo taken, this can feel confusing. The system said it was fine. The operator followed the process. Everything appeared correct. And yet the image was still declined or, worse still, the customer was very unhappy with the result.

We regularly see the outcome of this disconnect in our studio. Clients arrive with a “green tick approved” passport photo taken elsewhere that has been rejected by the issuing authority or, worse still, that they have rejected themselves because the final image is simply "horrid".

The frustration is often twofold. First, the inconvenience of rejection itself, especially when time matters. And second, the realisation that they now need to go through the process again, having already paid for a photo that, on the surface, appeared to meet every requirement.

What has become clear is that these systems are not designed to guarantee success. They are designed to reduce failure. They catch the big mistakes. They streamline the process. They allow a high volume of people to move through quickly and with a reasonable degree of confidence.

But they operate within boundaries.

Even the people who built them understand that. The constraints of the environment, the limitations of what can be measured, the reliance on threshold-based decisions rather than judgement: these are not hidden flaws. They are known conditions. The system works, but it works within a defined scope.

The difficulty arises when that scope is mistaken for completeness.

Because the final decision about a passport photo is not made by the system that captures it. It is made later, by a reviewing authority, applying a broader and more interpretive standard. The image has to stand on its own, outside the environment it was taken in, outside the logic of the software that approved it.

And that is where the difference lies.

The system can tell you that you’ve met the minimum requirements. It cannot tell you that your photo will be accepted. It simply offers you a green tick.

If you need your passport photo to be done right the first time, it is worth understanding that compliance is only part of the equation. As any experienced photographer knows, the real difference is almost always in the lighting, and in the quality of that light.